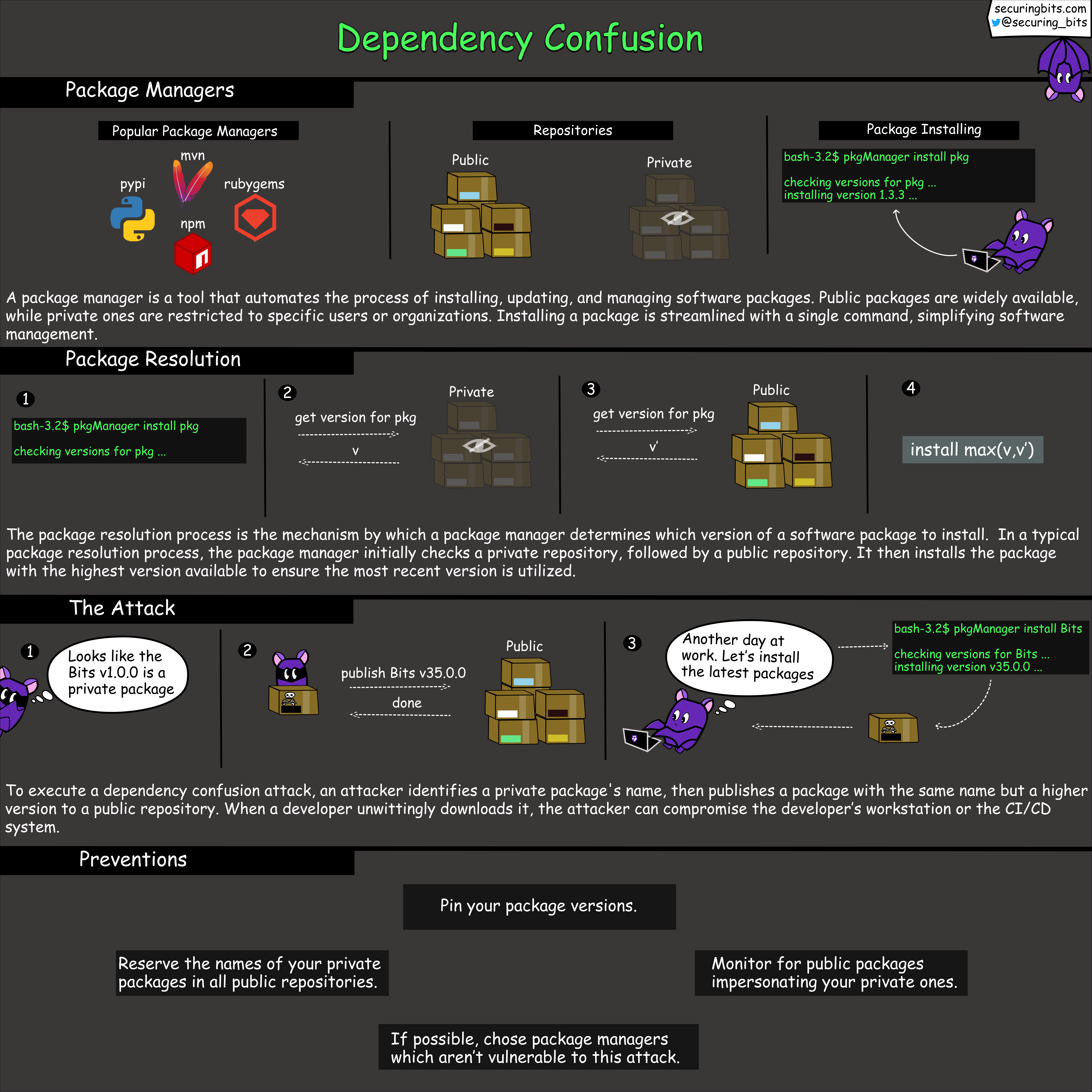

Dependency Confusion Attack

Everything I do professionally centers on helping engineers create amazing applications that are both secure and reliable. That’s why I build engineering tools and educational content that simplify application security.

Throughout my career, I have performed security audits for private and open-source projects and found critical vulnerabilities in Google and Mozilla products. I have also taught security to hundreds of engineers and students, and I have been an external lecturer and Ph.D. candidate in computer science at the Technical University of Denmark.

Here are some of the things I’m working on right now:

- Developing a tool 🛠️ that helps software engineers build applications that comply with privacy requirements

- Creating weekly educational content on application security using comic art 🦇

- Creating a blog 📝 on security at securingbits.com

If you’re interested in learning more about application security, I’d love to hear from you. Feel free to send me a message, and make sure to follow me so I can make security easy for you 🙂

Ever wondered how attackers carry out dependency confusion attacks? 🤔